👨‍🎓 Education

I am a Ph.D. candidate (2022–) at the College of Informatics, Huazhong Agricultural University, supervised by Prof. Hong Chen. Previously, I pursued an M.S. degree (2020–2022) under the guidance of Prof. Lingjuan Wu. I received a B.S. degree in Engineering from China Agricultural University in 2020.

🔬 Research Area

My research interests lie in the areas of optimization and learning theory, with emphasis on the following topics:

- Automatic machine learning/LLM (e.g., Bilevel Optimization, Multi-Agent Communication)

- Robust/Interpretable machine learning (e.g., Robust Metric, Sparse/Neural Additive Models)

- Applications (e.g., Hardware Trojan Detection, Intelligent Biology Analysis)

Here are some suggested videos for better understanding bilevel optimization, robust machine learning, interpretable additive models, and hardware Trojans.

If you are interested in my work or have any questions about it, please feel free to contact me: xlinml@163.com

🔥 News

- 2026.05: 🎉🎉 New Acceptance: Three papers on Interpretable ML/LLM Evaluation/GUI Agents to appear in ICML (CCF-A).

- 2026.04: 🎉🎉 New Acceptance: One paper on GUI Agents to appear in ACL Findings.

- 2026.04: 🎉🎉 New Acceptance: One paper on hardware security to appear in Computers & Security (CCF-B).

- 2026.01: 🎉🎉 New Acceptance: Two papers on interpretability to appear in Information Sciences (CCF-B) and ICASSP (CCF-B).

- 2026.01: 🎉🎉 New Awards: Two papers were selected as “Best Presentation in Session” at ICPADS.

- 2024.11: 🎉🎉 New Award: China Doctoral National Scholarship.

📝 Publications in Machine Learning

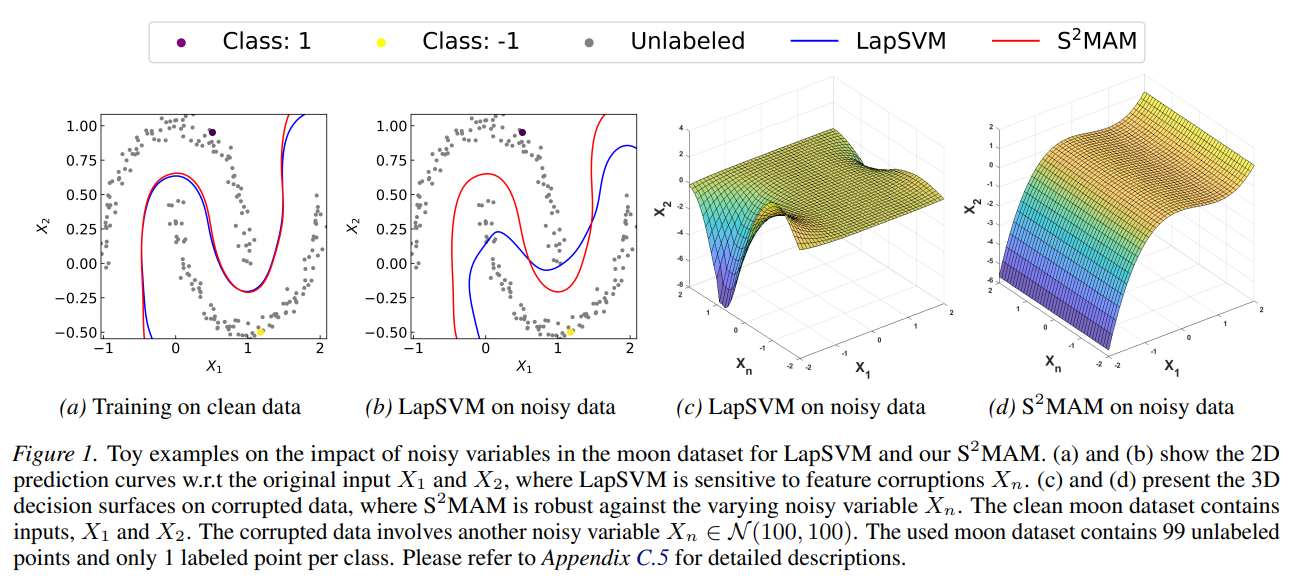

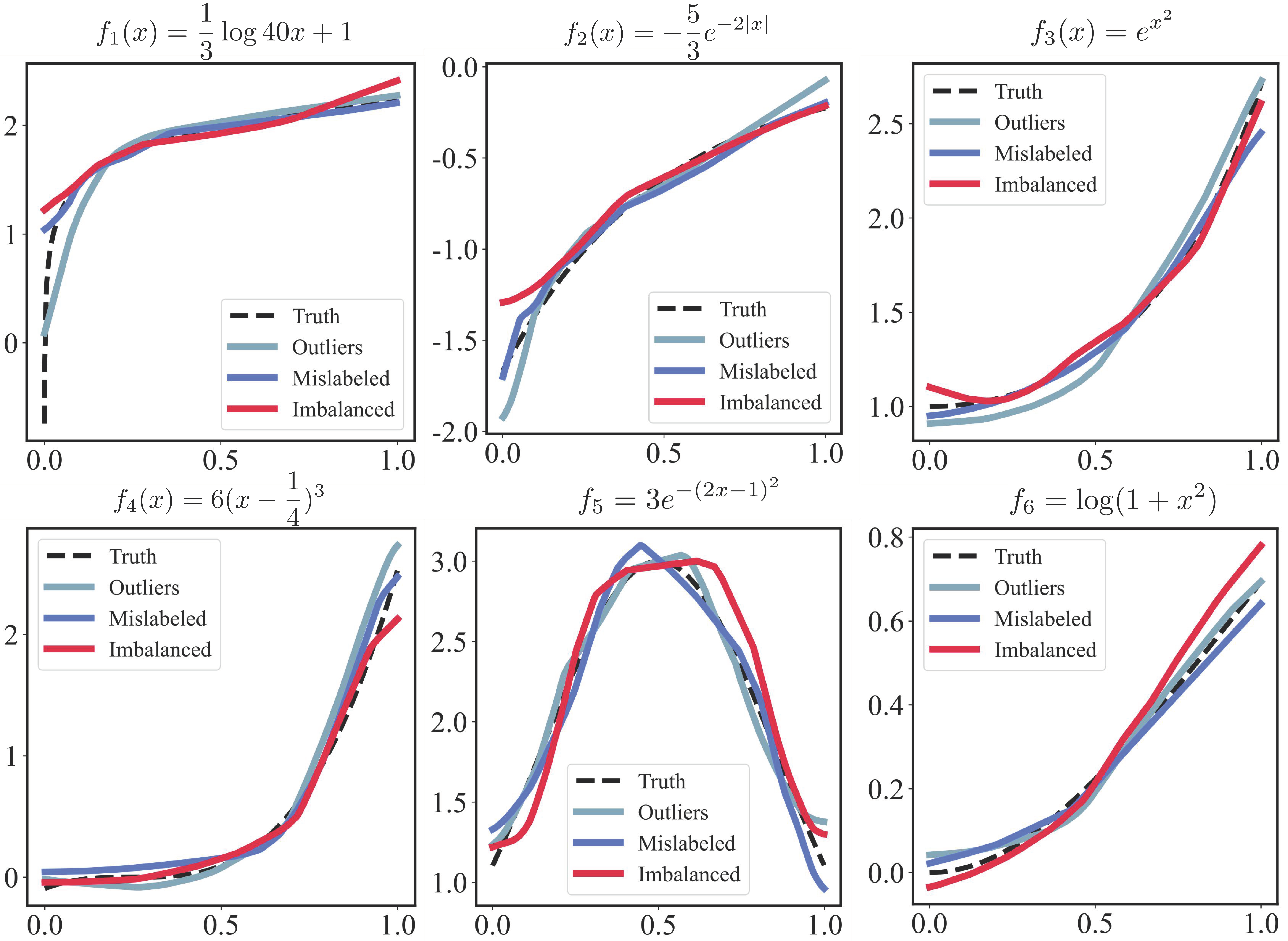

S2MAM: Semi-supervised Meta Additive Model for Robust Estimation and Variable Selection

Xuelin Zhang, Hong Chen*, Yingjie Wang, Tieliang Gong, Bin Gu

Forty-Third International Conference on Machine Learning 2026 [C]

- This paper introduces the Semi-Supervised Meta Additive Model (S2MAM) to overcome the vulnerability of traditional manifold regularization to noisy data by employing a bilevel optimization scheme that automatically selects informative variables and dynamically updates the similarity matrix.

- Supported by theoretical guarantees for convergence and generalization, extensive experiments confirm that the model achieves robust and interpretable predictions across diverse corrupted datasets.

- Implementation: Github Link

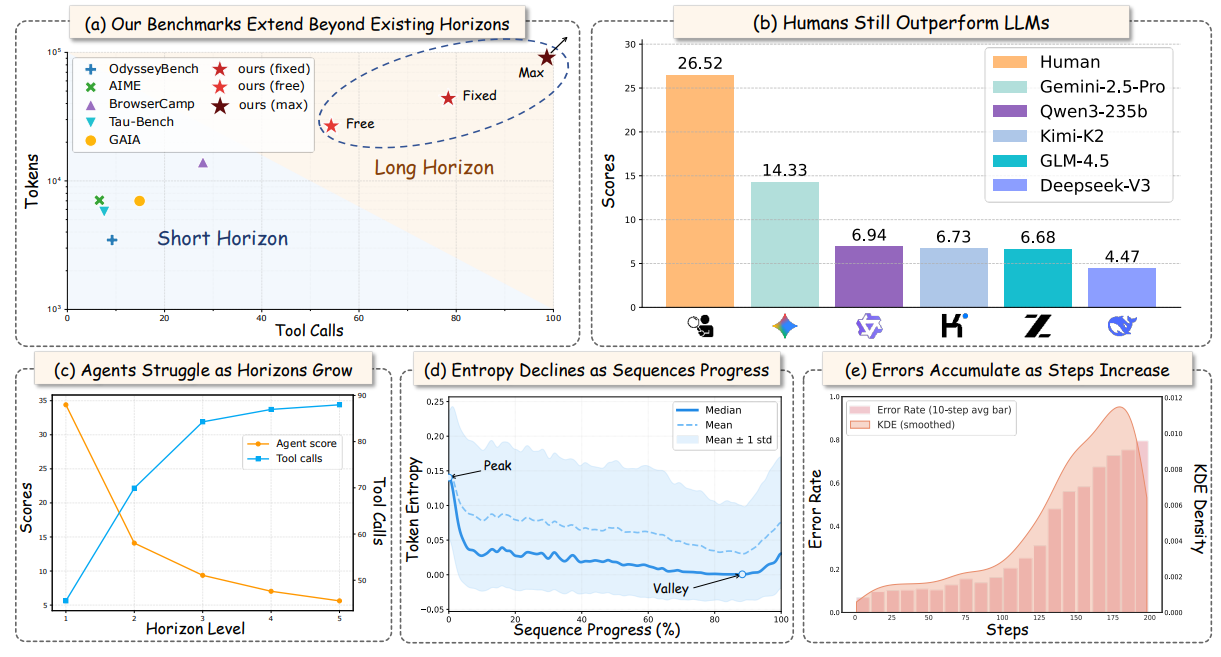

UltraHorizon: Benchmarking LLM-Agent Capabilities in Ultra Long-Horizon Scenarios

Haotian Luo#, Huaisong Zhang#, Xuelin Zhang#, Haoyu Wang#, Zeyu Qin#, WenJie Lu#, Guozheng Ma, Haiying He, Yingsha Xie, Qiyang Zhou, Zixuan Hu, Hongze Mi, Yibo Wang, Naiqiang Tan, Hong Chen, Yi R. Fung, Chun Yuan, Li Shen*

Forty-Third International Conference on Machine Learning 2026 [C]

- Existing evaluations for autonomous agents typically fail to capture the complexity of long-horizon, partially observable real-world tasks that require sustained reasoning, memory management, and tool use. To address this gap, we introduce a novel exploration-based benchmark across three environments, featuring extremely long agent trajectories with high token counts and frequent tool calls.

- Extensive experiments reveal that current state-of-the-art agents significantly underperform compared to humans and fail to improve with simple scaling, primarily due to in-context locking and fundamental capability gaps.

- Implementation: Github Link

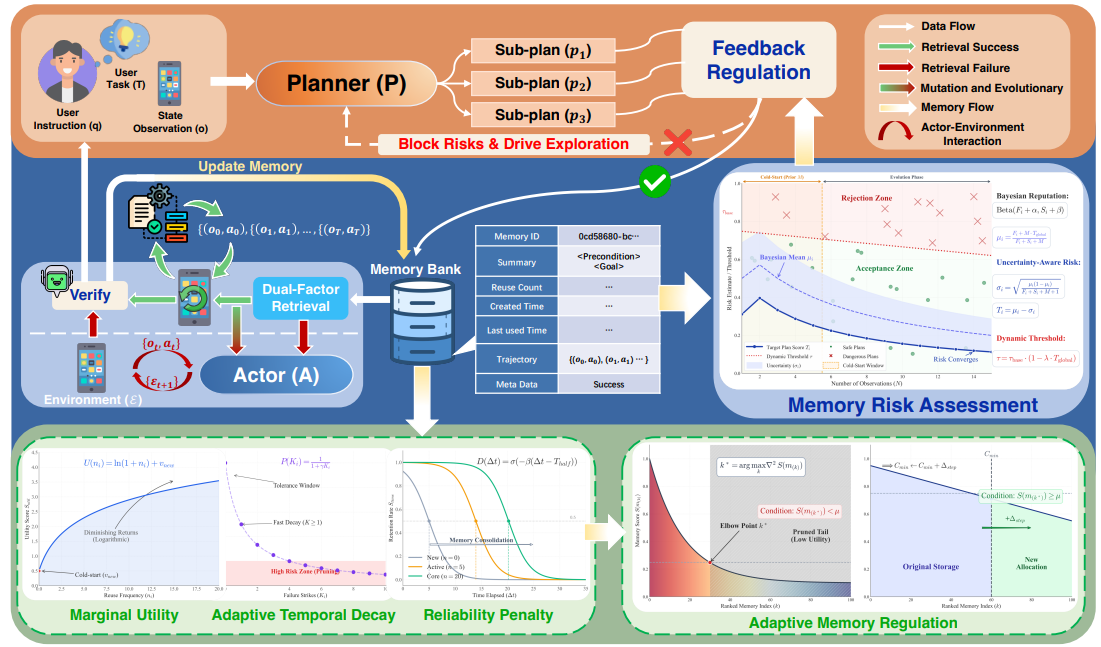

Darwinian Memory: A Training-Free Self-Regulating Memory System for GUI Agent Evolution

Hongze Mi, Yibo Feng, WenJie Lu, Song Cao, Jinyuan Li, Yanming Li, Xuelin Zhang, Haotian Luo, Songyang Peng, He Cui, Tengfei Tian, Jun Fang, Hua Chai, Naiqiang Tan*.

Forty-Third International Conference on Machine Learning 2026 [C]

- To overcome the limitations of current memory systems in complex GUI automation, the proposed Darwinian Memory System (DMS) uses a self-evolving, “survival of the fittest” approach to dynamically refine strategies by retaining successful actions and pruning suboptimal ones.

- Extensive testing demonstrates that DMS significantly improves the success rate, execution stability, and speed of general-purpose Multimodal Large Language Models without requiring additional training overhead.

- Implementation: Github Link

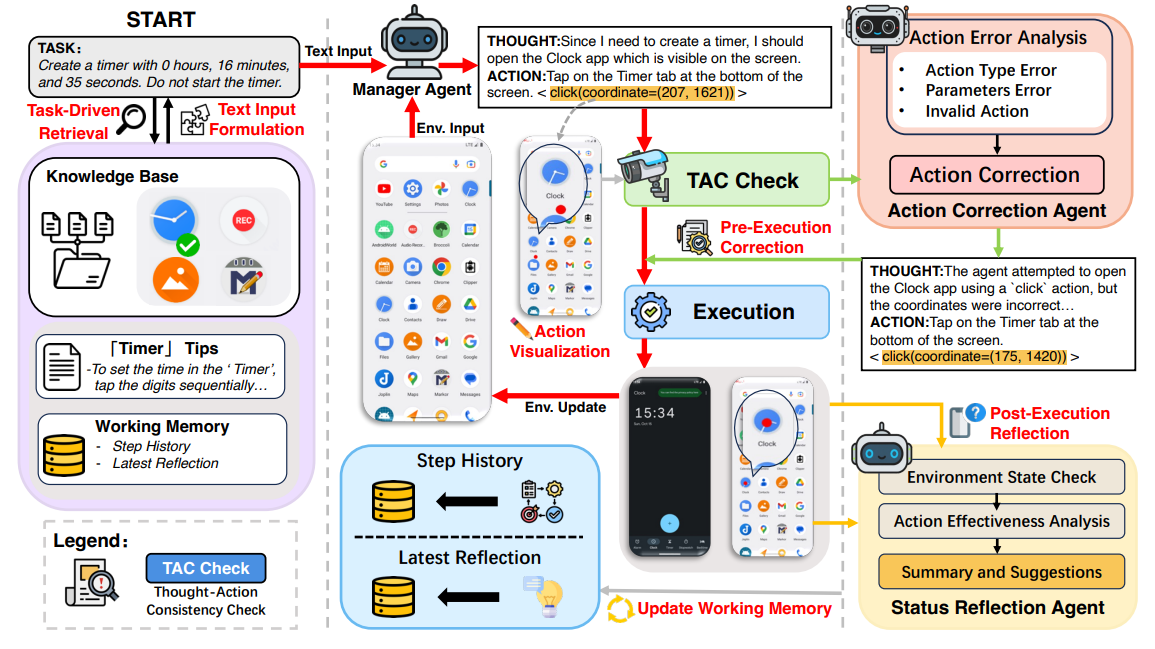

D-Artemis: A Deliberative Cognitive Framework for Mobile GUI Multi-Agents

Hongze Mi, Yibo Feng, WenJie Lu, Yuqi Wang, Jinyuan Li, Song Cao, He Cui, Tengfei Tian, Xuelin Zhang, Haotian Luo, Di Sun, Jun Fang, Hua Chai, Naiqiang Tan, Gang Pan.

Annual Meeting of the Association for Computational Linguistics 2026 [C]

- Proposing the D-Artemis deliberative framework, which enhances general-purpose multimodal large language models for GUI tasks without requiring training on complex trajectory datasets, by leveraging a fine-grained, app-specific tip retrieval mechanism.

- Designing a cognitive closed-loop mechanism of “pre-execution alignment and post-execution reflection,” where the collaborative operation of the TAC Check module, Action Correction Agent, and Status Reflection Agent proactively prevents execution failures and enables experiential learning.

- Implementation: Github Link

Distribution-Aware Neural Additive Models: Robust Interpretable Deep Learning with Feature Selection

Jingyi Chen, Xuelin Zhang, Peipei Yuan*, Liyuan Liu, Hong Chen.

International Conference on Acoustics, Speech, and Signal Processing 2026 [C]

- Proposing a novel Distribution-Aware Neural Additive Model integrating kernel density estimation to flexibly capture diverse noise structures without restrictive distributional assumptions.

- Incorporating group sparsity regularization to automatically select the most informative input features and enhance model interpretability as well as predictive performance.

- The implementation can be found at: Github Link

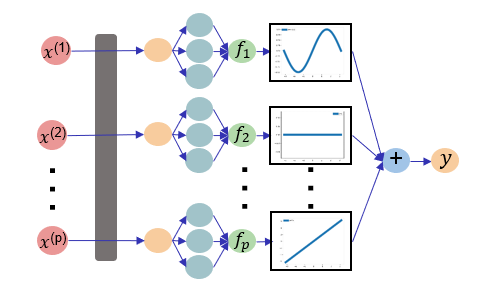

Maximum Likelihood Neural Additive Models

Jingyi Chen#, Xuelin Zhang#, Peipei Yuan, Rushi Lan, Hong Chen*.

Information Sciences 2026 [J]

- Algorithmically, we propose the Maximum Likelihood Neural Additive Model (ML-NAM), which employs kernel density estimation for non-parametric residual modeling to achieve robust learning against non-Gaussian noise without distributional assumptions.

- Theoretically, we establish non-asymptotic upper bounds on the excess risk, demonstrating that the model achieves minimax convergence rates with polynomial decay within Besov spaces.

- The implementation can be found at: Github Link

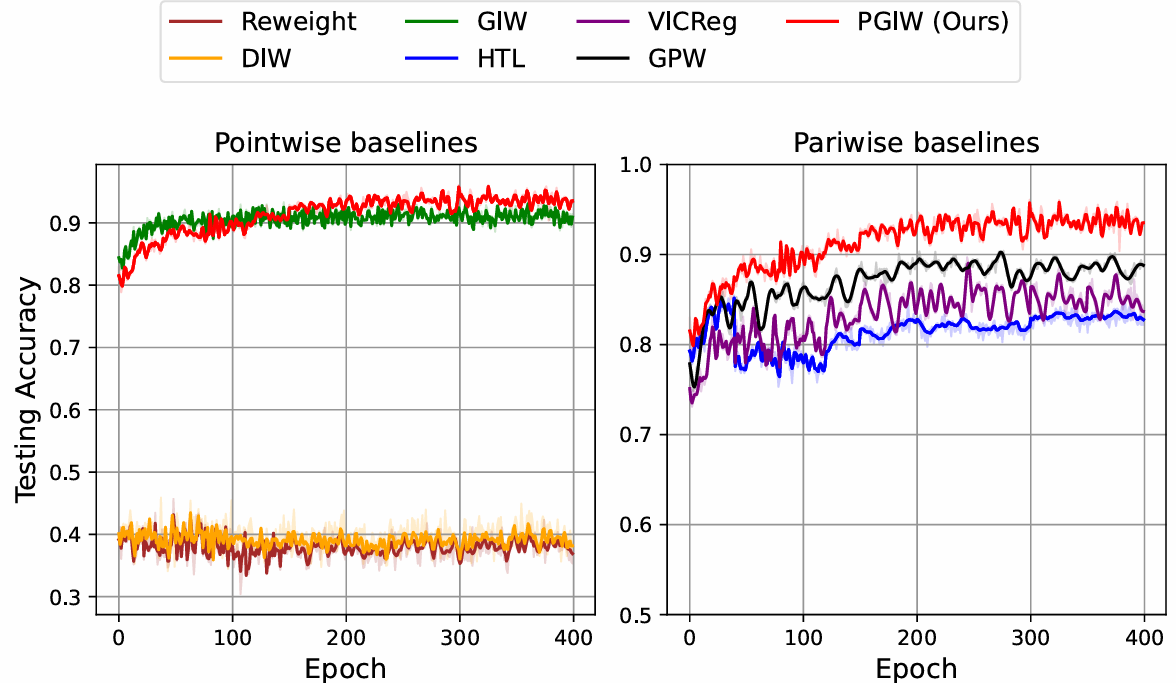

Pairwise Generalized Importance Weighting for Metric Learning under Distribution Shift

Richeng Zhou, Xuelin Zhang*, Hong Chen, Weifu Li, Liyuan Liu.

IEEE International Conference on Parallel and Distributed Systems 2025 [C] (Best Presentation in Session)

- Introduces an innovative solution to metric learning, effectively overcoming performance degradation under distributional shifts.

- Identifies existing algorithms’ limitations and proves risk consistency across broad distributional changes, offering strong theoretical guarantees.

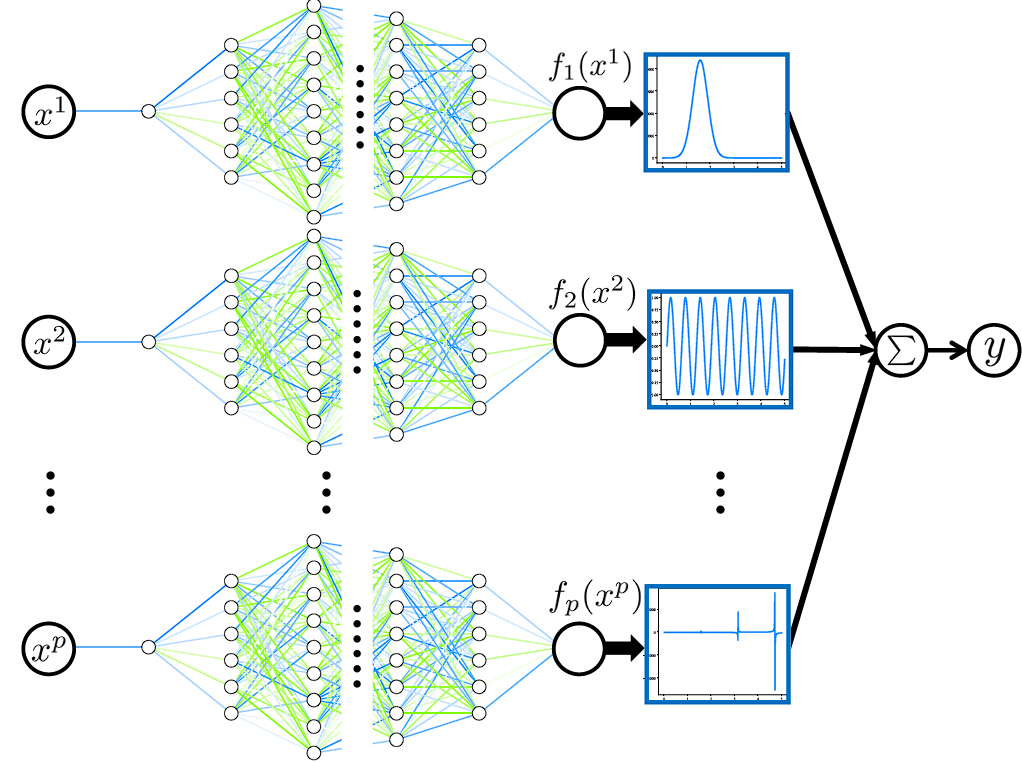

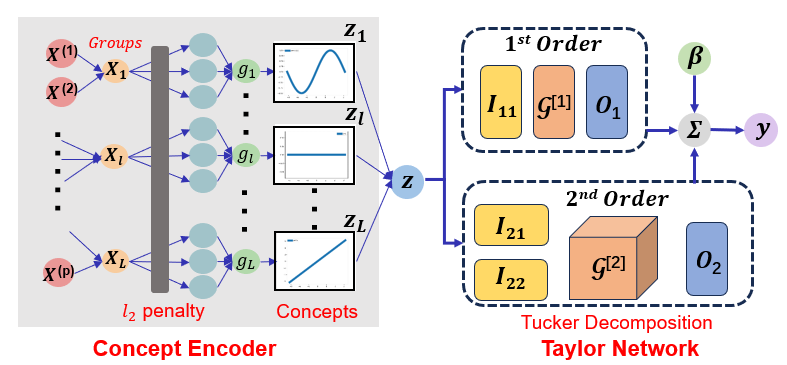

Interpretable Bilevel Additive Taylor Model

Wenxing Zhou, Chao Xu, Lian Peng, Xuelin Zhang*.

IEEE International Conference on Parallel and Distributed Systems 2025 [C] (Best Presentation in Session)

- Tucker decomposition is introduced for low-rank approximation by leveraging Taylor-series expansion to capture higher-order interactions.

- By fusing bilevel optimization with sparse neural additive models and the integrated Tucker decomposition, the model robustly handles label noise and class imbalance while preserving interpretability.

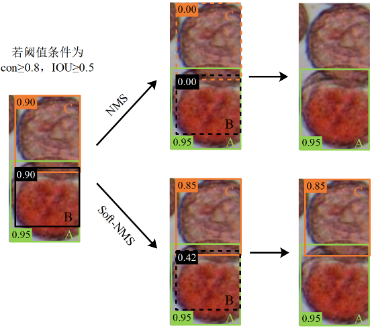

Citrus Pollen Viability Detection via Modified YOLOv11-FS Model

Liyuan Liu, Xuelin Zhang, Hong Chen, Weifu Li, Jianhua Liao, Kaidong Xie, Xiaomeng Wu, Yaohui Chen*.

Journal of Huazhong Agricultural University 2025 [J]

- An improved YOLOv11-FS model is proposed that effectively overcomes detection challenges such as the small size, clumping tendency, and complex background of citrus pollen grains.

- It provides reliable technical support for the breeding of seedless citrus varieties and can also underpin automated pollen-viability monitoring and cultivar improvement in the intelligent management of citrus orchards.

Interpretable Meta-weighted Sparse Neural Additive Networks

Xuelin Zhang, Hong Chen, Lingjuan Wu*.

ACM Conference on Information and Knowledge Management 2025 [C]

- Under a bi-level optimization scheme, we jointly learn adaptive sample weights and impose sparsity on a neural additive model’s univariate functions, yielding both continual robustness and single-feature interpretability; experiments across multiple distribution shifts confirm markedly superior performance and strong resistance to forgetting.

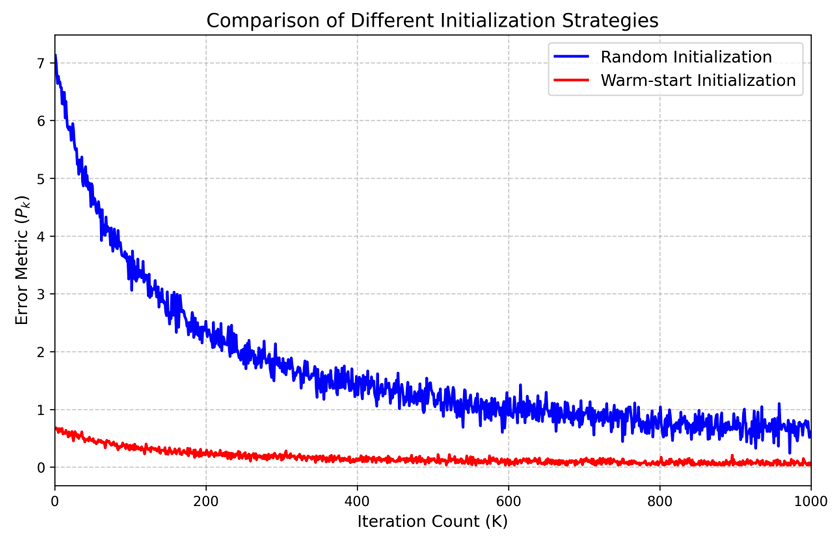

On the Convergence of Nonconcave-Nonconvex Max-Min Optimization Problem

Xuelin Zhang*

Journal of Numerical Simulations in Physics and Mathematics 2025 [J]

- This paper introduces a novel two-sided Polyak-Łojasiewicz and Quadratic Growth framework that establishes the convergence guarantee of O(1/K) for SAGDA in nonconvex-nonconcave max-min optimization, matching convex-concave rates under milder geometry.

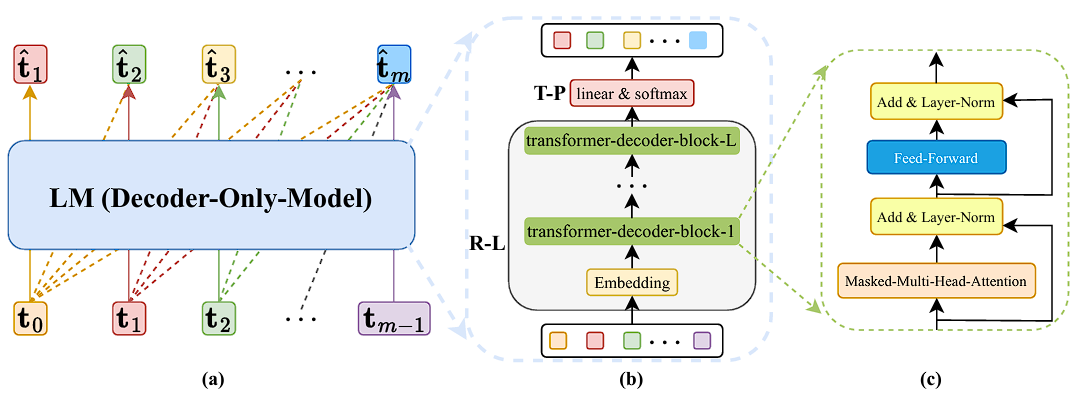

On the Generalization Ability of Next-Token-Prediction Pretraining

Zhihao Li, Xue Jiang, Liyuan Liu, Xuelin Zhang, Hong Chen and Feng Zheng.

International Conference on Machine Learning 2025 [C]

- This study establishes a theoretical framework for Next-Token-Prediction (NTP) pre-training based on Rademacher complexity, introduces a novel decomposition method, and provides the first generalization bounds for NTP. The findings offer valuable insights into how model parameters influence generalization and have been empirically validated, advancing both the theoretical comprehension and practical application of large language models.

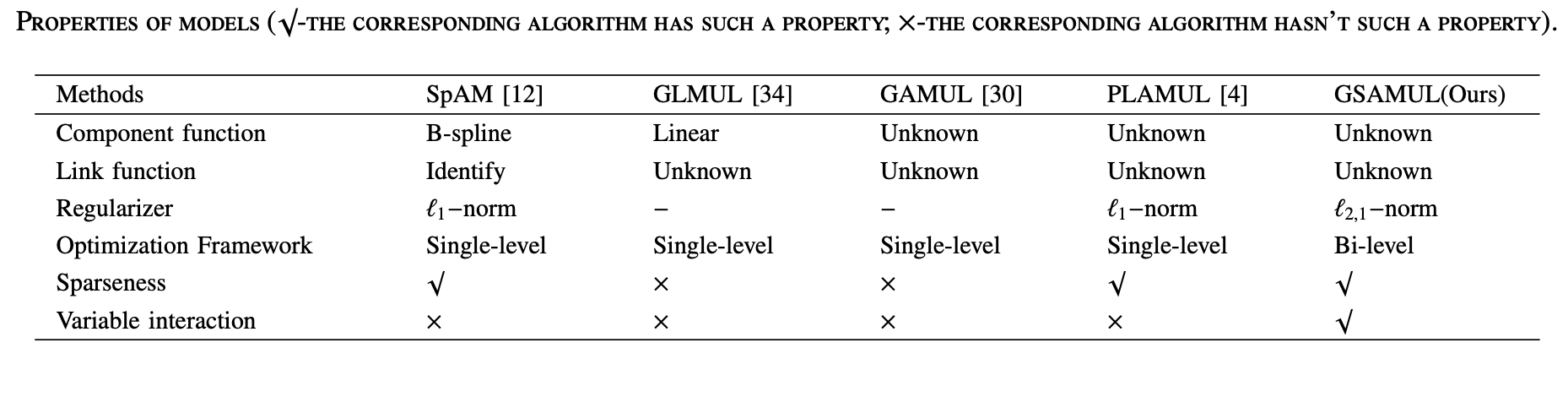

Generalized Sparse Additive Model with Unknown Link Function

Peipei Yuan, Xinge You*, Hong Chen, Xuelin Zhang, and Qinmu Peng.

IEEE International Conference on Data Mining 2024 [C]

- In this work, we propose a novel generalized additive model with a flexible link function that is learned automatically by a bilevel scheme. The proposed model supports nonlinear approximation, hidden interaction discovery, and feature selection, and it comes with a theoretical guarantee of algorithmic convergence.

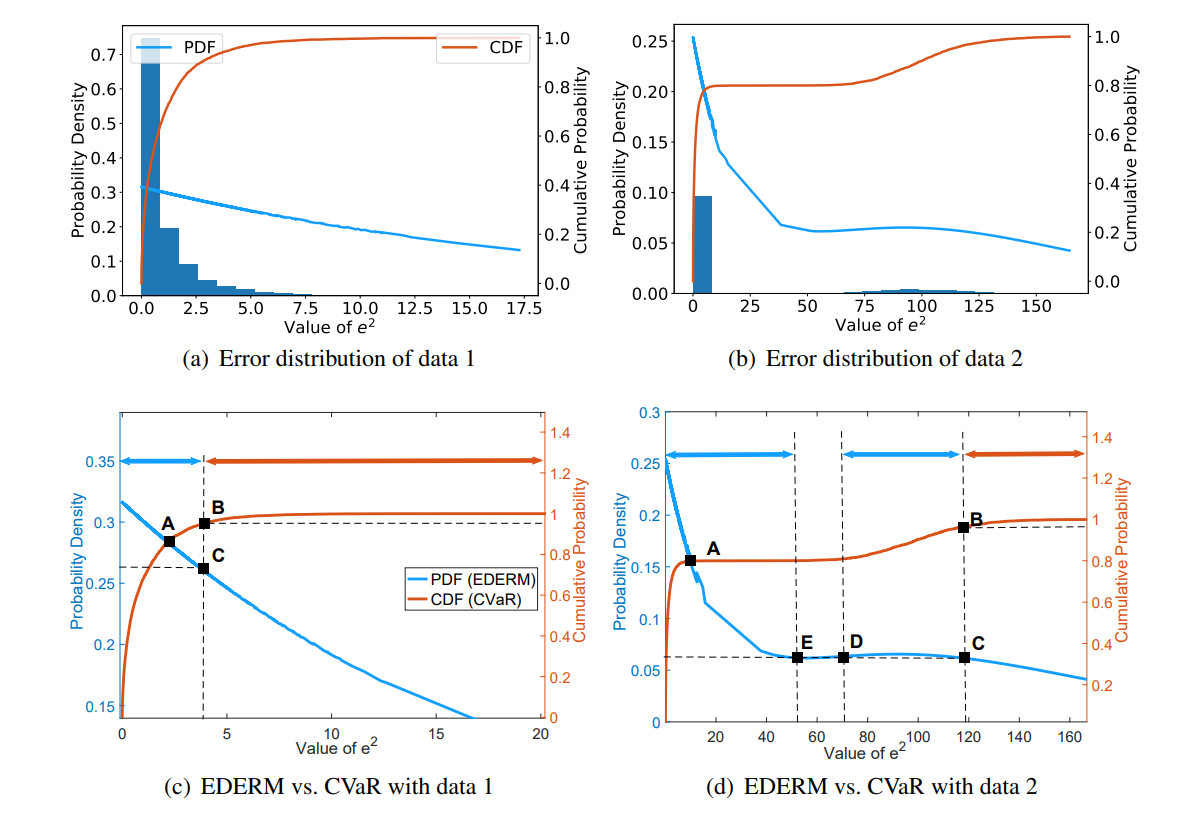

Error Density-dependent Empirical Risk Minimization

Hong Chen*, Xuelin Zhang, Tieliang Gong, Bin Gu, Feng Zheng.

Expert Systems With Applications 2024 [J]

- This paper goes beyond the limitations of error value-dependent learning criteria and proposes the EDERM framework for robust regression against atypical data. Extensive empirical evaluations validate the effectiveness of our method.

- The implementation codes can be found at: Github Link

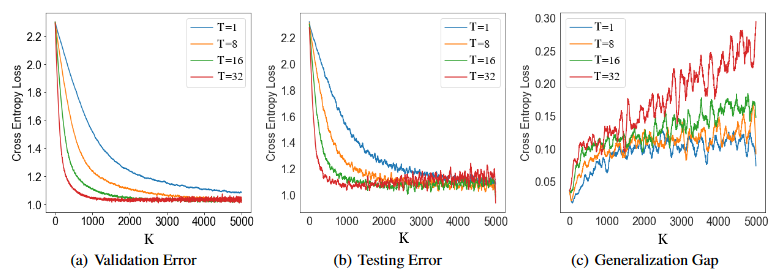

Fine-grained Analysis of Stability and Generalization for Stochastic Bilevel Optimization

Xuelin Zhang, Hong Chen*, Bin Gu, Tieliang Gong, Feng Zheng.

International Joint Conference on Artificial Intelligence 2024 [C] (Oral)

- In this paper, we provide a systematic generalization analysis of the first-order gradient-based bilevel optimization methods, based on the (on-average argument) algorithmic stability technique.

- The verification codes are provided at: Github Link

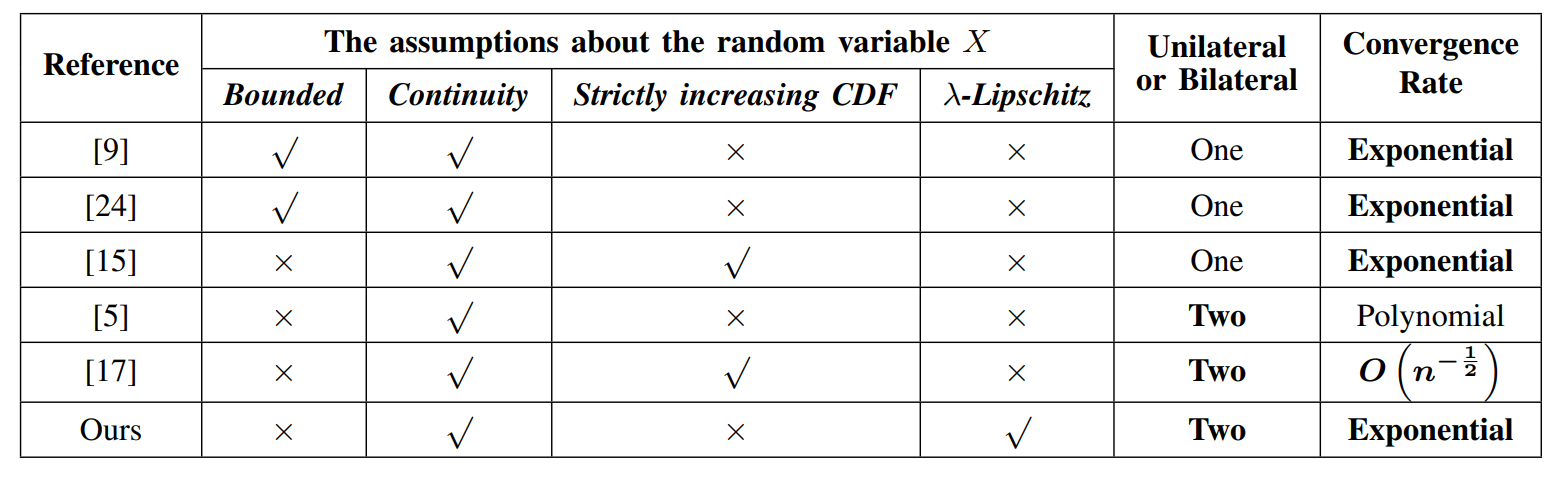

Improved Concentration Bound for CVaR

Peng Sima, Hao Deng*, Xuelin Zhang, Hong Chen.

International Joint Conference on Neural Networks 2024 [C]

- This paper introduces a novel estimator that relies on an estimator of Value at Risk (VaR) and investigates its concentration inequalities in independent scenarios where the underlying distributions are sub-Gaussian, sub-exponential, or heavy-tailed. The derived inequalities are bilateral, exhibit exponential decay, and are not confined to bounded scenarios.

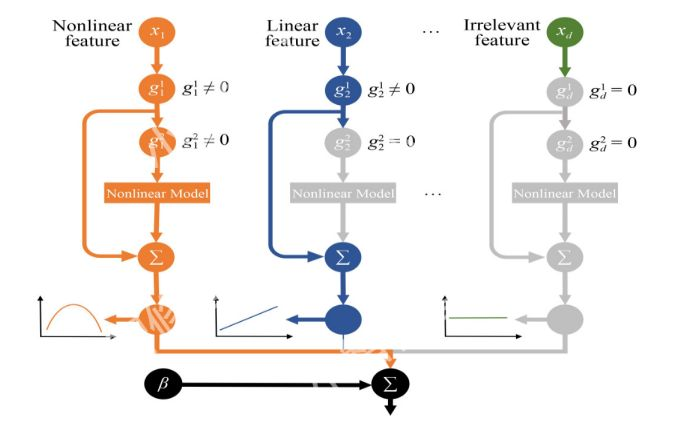

Neural Partially Linear Additive Model

Liangxuan Zhu, Han Li*, Xuelin Zhang, Lingjuan Wu, Hong Chen.

Frontiers of Computer Science 2023 [J]

- This paper proposes a Neural Partially Linear Additive Model (NPLAM) that automatically distinguishes insignificant, linear, and nonlinear features in neural networks, thereby achieving model-level interpretability.

Stepdown SLOPE for Controlled Feature Selection

Jingxuan Liang, Xuelin Zhang, Hong Chen*, Weifu Li, Xin Tang.

AAAI Conference on Artificial Intelligence 2023 [C] (Oral)

- This paper extends Sorted L-One Penalized Estimation (SLOPE) beyond its previous focus on false discovery rate (FDR) control by considering a stepdown-based SLOPE that controls the probability of k or more false rejections (k-FWER) and the false discovery proportion (FDP).

- The codes are shared at: Github Link

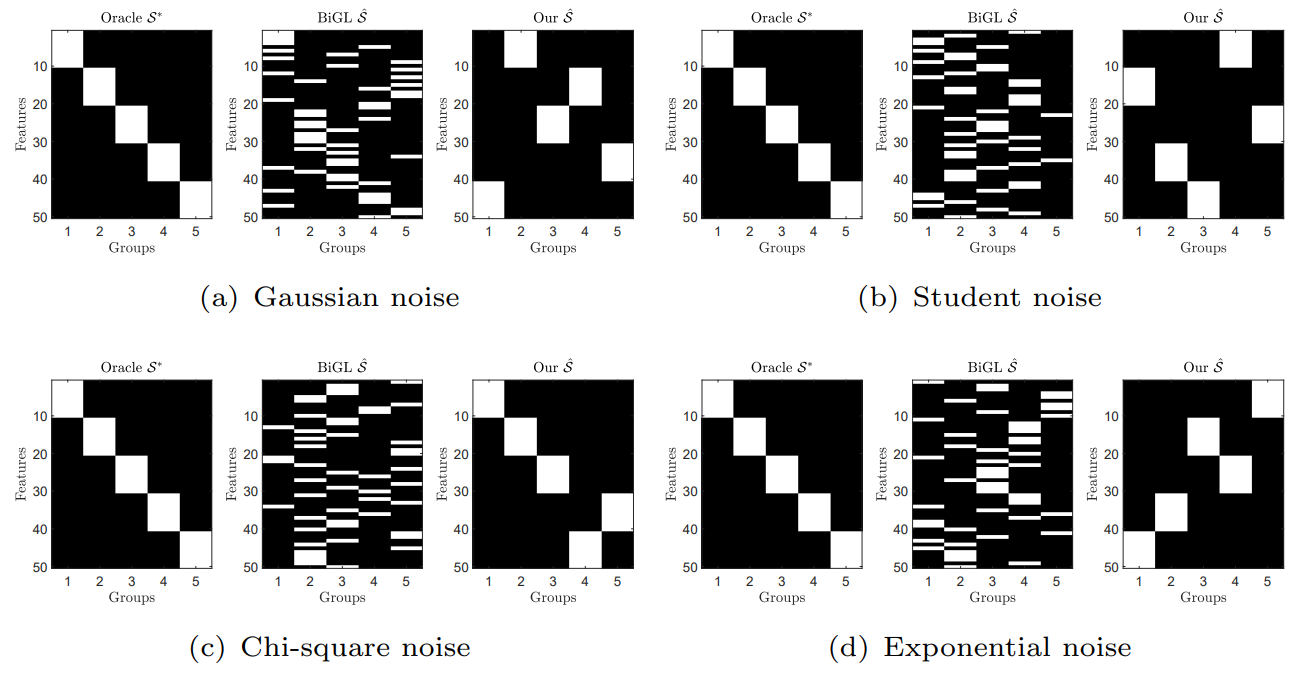

Robust variable structure discovery based on tilted empirical risk minimization

Xuelin Zhang, Yingjie Wang, Liangxuan Zhu, Hong Chen, Han Li, Lingjuan Wu*.

Applied Intelligence 2023 [J]

- In this paper, we propose a new robust variable structure discovery method for group lasso based on a convergent bilevel optimization framework, where the robust tilted empirical risk minimization is adopted.

- The implementation codes can be found at: Github Link

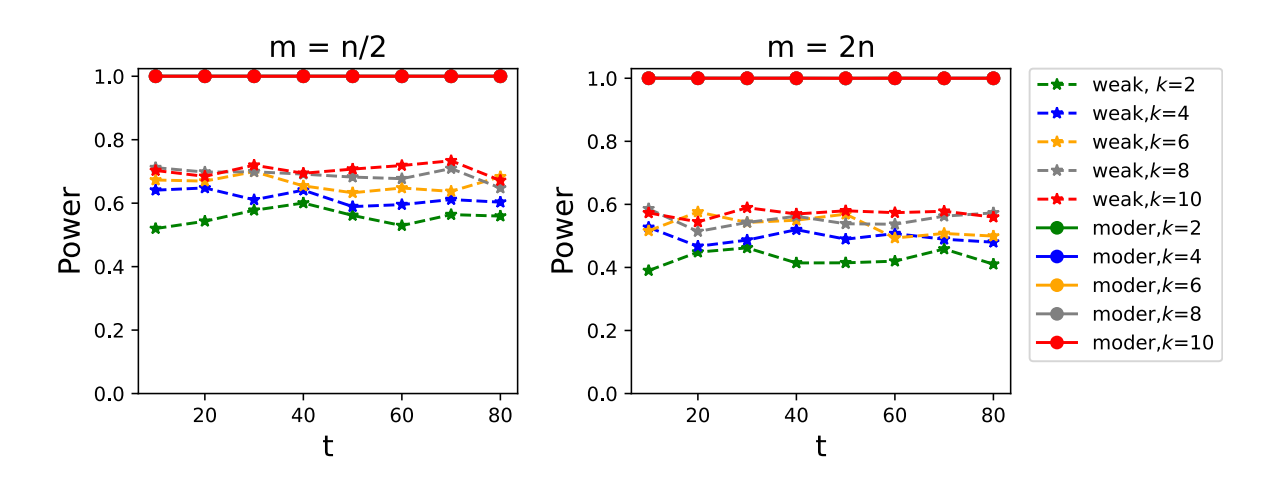

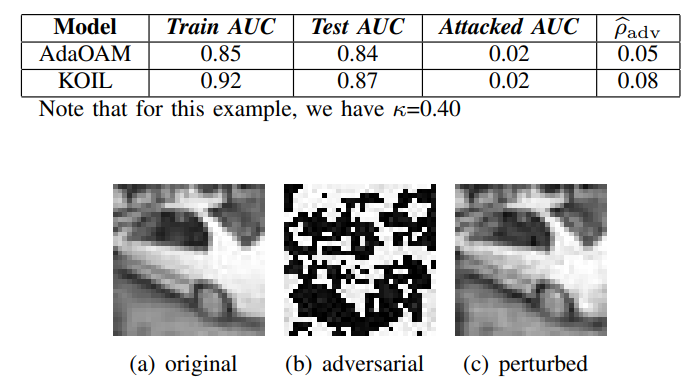

Robustness of classifier to adversarial examples under imbalanced data

Wenqian Zhao, Han Li, Lingjuan Wu*, Liangxuan Zhu, Xuelin Zhang, Yizhi Zhao.

International Conference on Computer and Communication Systems 2022 [C]

- In this paper, we provide a theoretical framework for analyzing the robustness of a classifier to adversarial examples (AE) under an imbalanced dataset from the perspective of AUC (area under the ROC curve), and derive an interpretable upper bound.

📝 Publications in Hardware Security

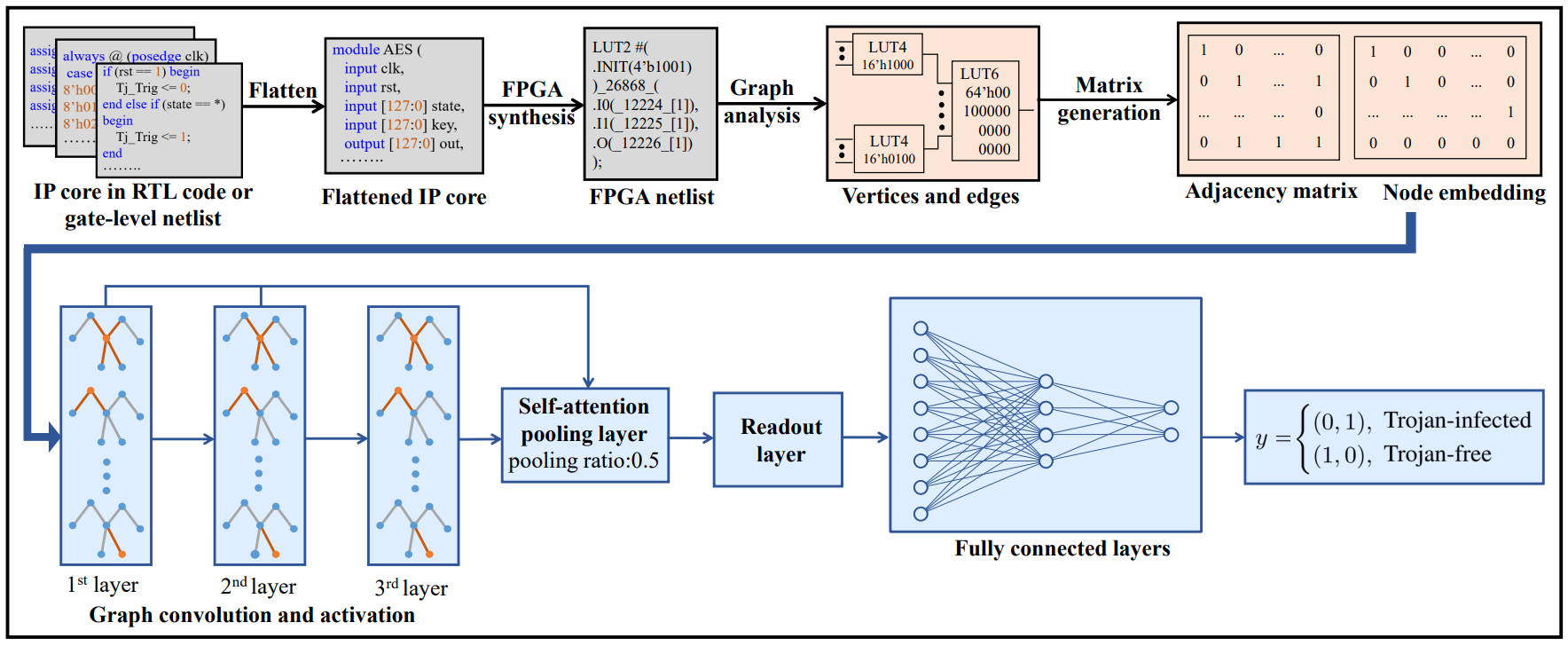

Explainable Hardware Trojan Detection and Localization in FPGA Netlists

Lingjuan Wu, Hao Su, Siyi Tian, Xuelin Zhang, Wei Hu*.

Computers & Security 2026 [J]

- Proposing an explainable hardware Trojan detection and localization method based on graph neural networks (GNNs), leveraging the initialization vector features of look-up tables (LUTs) in FPGA netlists.

- By introducing Granger causality theory, the method further reveals the decision-making mechanism of the GNN model to achieve precise identification of infected IP cores and Trojan nodes in Xilinx FPGA netlists.

Towards Precise and Explainable Hardware Trojan Localization at LUT Level

Hao Su, Wei Hu, Xuelin Zhang, Dan Zhu, Lingjuan Wu*.

IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems 2025 [J]

- The proposed approach aims to extract the rich structural and behavioral features at the look-up-table (LUT) level to train an explainable graph neural network (GNN) model for classifying design nodes in FPGA netlists and identifying the Trojan-infected ones.

- The implementation codes can be found at: Github Link

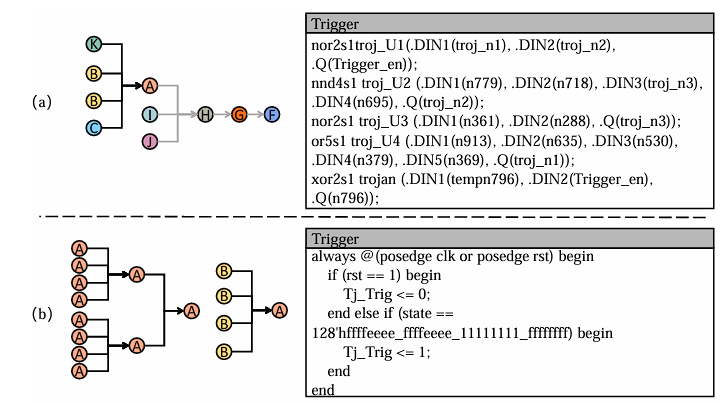

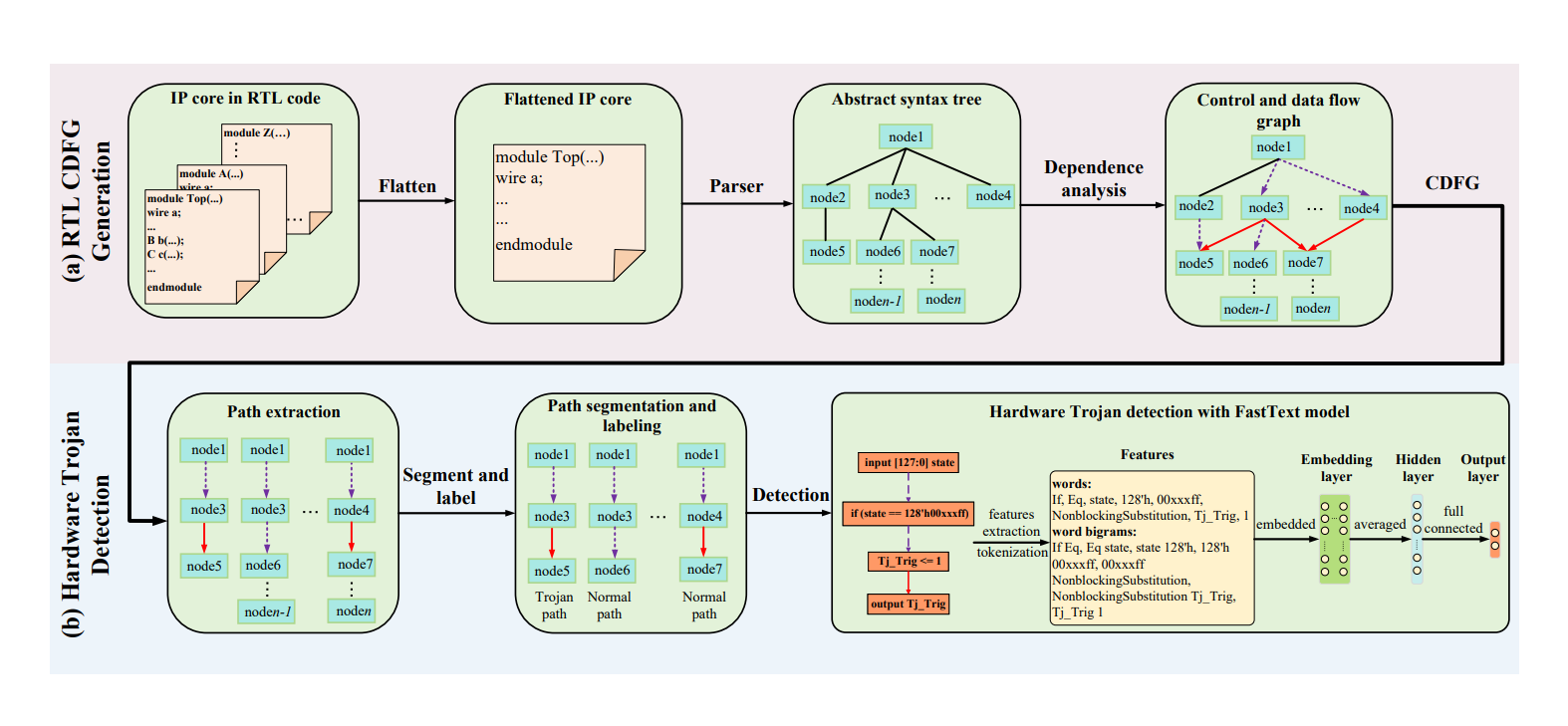

Pinpointing Hardware Trojans Through Semantic Feature Extraction and Natural Language Processing

Yichen Li, Wei Hu, Hao Su, Xuelin Zhang, Yizhi Zhao, Lingjuan Wu*.

International Test Conference in Asia 2024 [C]

- In this work, we propose a novel hardware Trojan detection method at the register-transfer level (RTL). Our approach transforms the hardware design into a control-data flow graph (CDFG), followed by path extraction and segmentation.

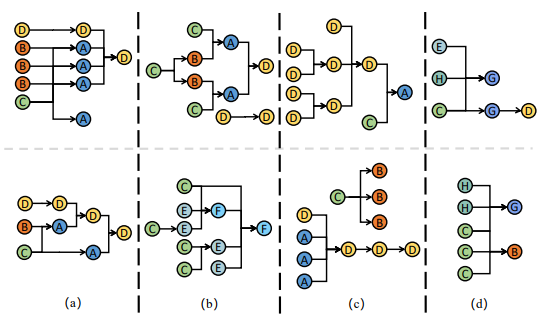

Automated Hardware Trojan Detection at LUT Using Explainable Graph Neural Networks

Lingjuan Wu, Hao Su, Xuelin Zhang, Yu Tai, Han Li, Wei Hu*.

International Conference on Computer-Aided Design 2023 [C]

- In this work, we propose a novel hardware Trojan detection method based on graph neural networks (GNNs) targeting FPGA netlists. We leverage the rich explicit structural features and behavioral characteristics at the LUT level, which offers an ideal abstraction level and granularity for Trojan detection. A GNN model with an optimized class-balanced focal loss is trained for automated Trojan feature extraction and classification.

- Model implementation is available at Github Link

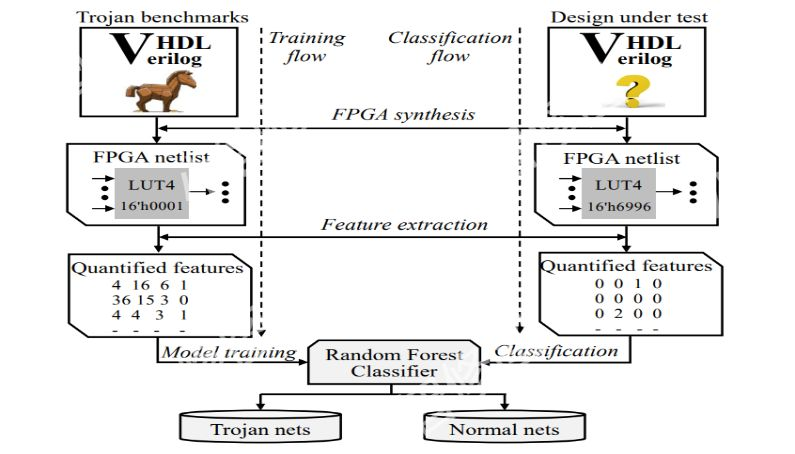

Hardware Trojan Detection at LUT: Where Structural Features Meet Behavioral Characteristics

Lingjuan Wu, Xuelin Zhang, Siyi Wang, Wei Hu.

International Symposium on Hardware Oriented Security and Trust 2022 [C]

- This work proposes a novel hardware Trojan detection method that leverages static structural features and behavioral characteristics in field programmable gate array (FPGA) netlists. Mapping of hardware design sources to look-up-table (LUT) networks makes these features explicit, allowing automated feature extraction and further effective Trojan detection through machine learning.

- The implemented codes are available at: Github Link

🎖️ Activities and Honors

- 2025: Internship at DiDi AI-Lab: L-Lab.

- 2025.12: A report was given at the 31st IEEE International Conference on Parallel and Distributed Systems 2025.

- 2025.11: A report was given at the 34th ACM Conference on Information and Knowledge Management 2025.

- 2025.4: A report was given at HBSIAM 2025.

- 2024.12: A report was given at the CCF Wuhan 2024 Annual Conference and 8th Outstanding Doctoral Student Academic Forum.

- 2024.11: China Doctoral National Scholarship.

- 2024.11: A poster was presented at CSIAM 2024.

- 2024.8: A report was given at IJCAI 2024, Jeju, South Korea.

- 2024.4: A report was given at HBSIAM 2024.

- 2024: Internship at Sun Yat-sen University.

🛠️ Authorized Patents

- 2024.06: Hong Chen, Xuelin Zhang, Weifu Li, Feng Zheng. CN114580299A.

💬 Academic Services

- Conference reviewer for ICLR, ICML, NeurIPS, CVPR, AAAI, AISTATS, IJCNN, ACML and their Findings/Workshops.

- Journal reviewer for Artificial Intelligence, Machine Learning, Expert Systems With Applications, Discover Analytics, Statistics and Computing, International Journal of Applied and Computational Mathematics, International Journal of Data Science and Analytics, Journal of Infrastructure, Policy and Development, Molecular & Cellular Biomechanics, Journal of Biomedical Research.